What's Wrong With Observability Pricing?

Observability Pricing: Bargain or Rip-off?

There’s something wrong with the pricing of observability services. It’s not just that it costs a lot (it certainly does); in many cases it’s also almost impossible to discern precisely how the costs are calculated. The service itself, the number of users, the number of sources, the analytics, the retention period (including any extended retention), and the engineers on staff who maintain the whole system all feed into the final expense. For some companies, it’s as much as thirty percent of their outside vendor expenses.

Observability is a $17 billion industry, and it is projected to grow by at least $5 billion within five years. More new customers are entering the market than new vendors, which is enough to keep prices rising despite the increased competition. But there’s more going on than mere demand driving up prices. Observability is also one of the fastest-innovating spaces in the tech world today. So, are these immense costs worth the innovations?

Spoiler alert: no.

Most consumers are overpaying for metrics, traces, and logs.

Okay, there is more nuance than that. Observability is critical, and companies can’t just opt out. Every tech company needs it, and many non-tech companies find value in it.

As security, compliance, and administrative needs turn digital in nearly every industry worldwide, the observability market will only expand and dig deeper into everyday business until it is a universal norm. Indispensable products often come with a premium price, sans price regulation.

Look at the average cost of smartphones over the last decade – phones have become the most essential item for anyone, even in underdeveloped regions, and “entry-level” phones now cost double what flagships were in the late 2000s.

As with smartphones, much of the price increase is justified by increased value. In observability, that value comes in the form of unique services, improved efficiency, direct customer support, and ease of use. But also like smartphones, observability can trap you in a specific ecosystem (like *cough cough* Apple), and those trapped in an ecosystem cannot quickly move out, so they end up paying a premium for no added value beyond remaining in that ecosystem. It’s probably not fair to go so far as to call such practices “predatory,” since there is still value in the ecosystem and a relatively unobstructed choice between observability solutions. Still, no one competing in the observability space is going out of their way to make switching to a competing platform easier.

Pricing structures vary wildly across observability services, and it’s easier to look internally at what expenses those companies undertake to provide their services than it is to look at how they price their services externally. The costs are based on many factors: feature set, user base, data sources, and data volume.

Most observability solutions base their pricing on some combination of those factors, which makes sense because they correlate directly to the costs that their customers create for them.
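
As a rough illustration of how those four factors can stack into a single bill, here is a minimal Python sketch. Every rate and field name in it is a made-up assumption for illustration, not any vendor’s actual pricing.

```python
from dataclasses import dataclass


@dataclass
class Usage:
    """One month of usage for a hypothetical customer."""
    users: int
    sources: int
    gb_ingested: float
    premium_features: int


# Hypothetical rates, chosen only to make the arithmetic easy to follow.
RATES = {
    "per_user": 20.0,      # dollars per user per month
    "per_source": 5.0,     # dollars per data source per month
    "per_gb": 0.25,        # dollars per GB ingested
    "per_feature": 500.0,  # dollars per premium feature per month
}


def multi_factor_bill(usage: Usage, rates: dict = RATES) -> dict:
    """Return each line item plus the total for a four-factor pricing model."""
    lines = {
        "users": usage.users * rates["per_user"],
        "sources": usage.sources * rates["per_source"],
        "volume": usage.gb_ingested * rates["per_gb"],
        "features": usage.premium_features * rates["per_feature"],
    }
    lines["total"] = sum(lines.values())
    return lines


bill = multi_factor_bill(Usage(users=10, sources=40, gb_ingested=1000, premium_features=2))
for item, amount in bill.items():
    print(f"{item}: ${amount:,.2f}")
```

With these invented numbers, the premium features line is the largest part of the total, which is the kind of detail that is hard to see when a bill arrives as a single figure.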

The problem is that most giant observability solutions toy with their pricing schemes to appeal to a broader variety of customers. They rope in small companies with apparently low costs only to double or triple them later, upsell “premium” features that are essential for most businesses, and carefully craft large jumps in their pricing models that correlate to the customer’s cost of switching. It’s brilliant if you’re staring at the bottom line, but it frustrates customers.

Companies all have different observability needs. Much of the increased competition and increased pricing in observability can be attributed to companies building broader platforms to serve a widening range of use cases, then subsidizing those new features by charging more for their services overall. That means charging more to firms that don’t necessarily need the same bells and whistles as everyone else.

Look at a whale like Netflix. They are primarily concerned with maintaining extensive bandwidth connections, hosting content as cheaply as possible, and moving video from their servers to customers on the most efficient paths. It doesn’t matter to them whether you’re loading the video from a data center across the street or on the other side of the world; they want to get the data to you as cheaply as possible, from wherever they can store it as cheaply as possible, without any severe slowdowns in connection speed. While still a concern internally, security is less of an issue when delivering their services. Sending The Office from one machine to another isn’t exactly a national security threat.

When a company like Netflix is hacked, the worst outcome for most of us is waiting 3-5 business days for a new credit card to arrive in the mail. For them, observability solutions serve primarily as a tool for optimization.

Another massive whale you may not have heard of, APEX Clearing, handles market transactions for brokers like Robinhood, SoFi, and Acorns. Chances are pretty good that a financial institution you regularly engage with does business with APEX Clearing without you even being aware of it. In a way, that’s a good thing. They also move massive amounts of data, and speed and efficiency matter to them, but unlike Netflix, security is a make-or-break factor for their business.

A significant hack in their system or observability solution, like the SolarWinds supply chain attack disclosed in 2020, which reached thousands of organizations through a compromised software update, can have massive economic consequences. For the same observability solution to appeal to both types of users, the feature set must be twice as large and twice as robust. There is some overlap, but the ‘must-have’ features between customers can look wildly different.

Observability is changing fast. Firms need to innovate or acquire innovators to keep up. That leads to higher quality, but also to higher costs and feature bloat that is irrelevant to many customers. Pricing models are ultimately based on expenses incurred by observability services. Still, they are often composed of an obscure combination of factors that leaves customers unsure exactly how their bills are calculated.

Observability platforms promise customers competitive pricing, but they often leave crucial services out of basic plans, or levy massive fees and price increases as customers become more dependent on their services and switching becomes less feasible.

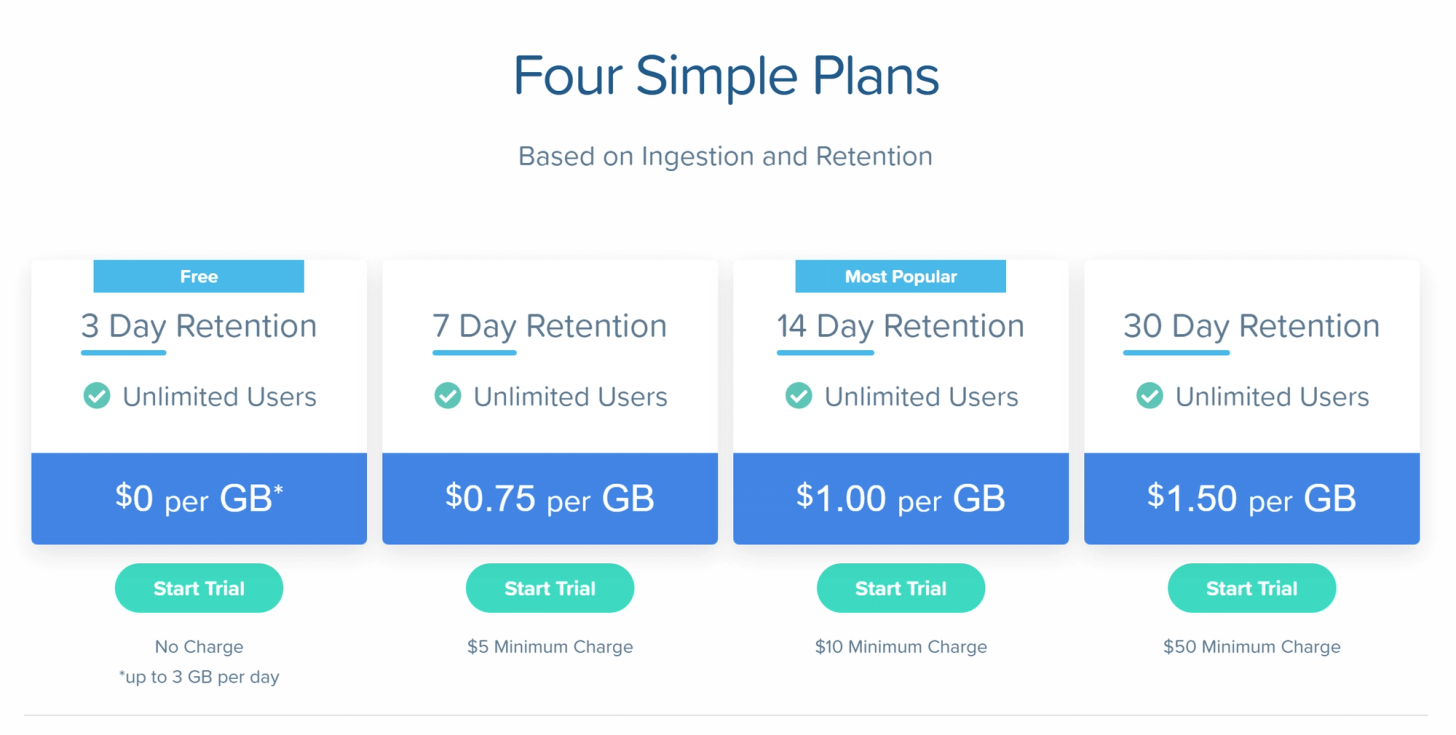

There is no clear solution. People need observability and are willing to pay a lot for it, but no one wants to spend significantly more than they have to without getting more value in return.

The first step to avoiding this situation as a customer is finding an observability solution that offers transparent pricing. This can be tricky. Many solutions boast pricing pages that seem easy enough to understand. Still, one of the four factors discussed above (features, users, sources, and volume) is probably hidden in the fine print as a means to trigger a massive price jump somewhere down the road.

The next best solution is to find a service that charges based on only one factor. Most likely, that will be volume. Volume has the most direct correlation to cost for the providers, so the providers with the most transparent pricing models, like observIQ, charge directly based on consumption. A volume-based pricing model makes it easy to predict your costs, even as you scale. You may end up paying for features you don’t need, but you won’t get roped into upsells or locked into pricing jumps as you grow your business.
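
To make the predictability argument concrete, here is a small Python sketch that compares a volume-only bill with a tiered plan that has built-in price jumps. The per-GB rate and the tier boundaries are hypothetical numbers picked for illustration; they do not describe observIQ’s or anyone else’s real price list.

```python
# Hypothetical flat rate for a volume-only plan (dollars per GB ingested).
RATE_PER_GB = 0.25

# Hypothetical tiered plan: (maximum GB included, flat monthly price in dollars).
TIERS = [(500, 100.0), (2000, 600.0), (10000, 4000.0)]


def volume_bill(gb: float, rate_per_gb: float = RATE_PER_GB) -> float:
    """Volume-only pricing: the bill grows in direct proportion to usage."""
    return gb * rate_per_gb


def tiered_bill(gb: float, tiers: list = TIERS) -> float:
    """Tiered pricing: the bill is flat inside a tier and jumps at each boundary."""
    for cap, price in tiers:
        if gb <= cap:
            return price
    raise ValueError("volume exceeds the largest tier")


# Compare the two models just below and just above each tier boundary.
for gb in (400, 500, 501, 2000, 2001):
    print(f"{gb:>5} GB  volume-only: ${volume_bill(gb):>8.2f}  tiered: ${tiered_bill(gb):>8.2f}")
```

Under these assumed numbers, going from 500 GB to 501 GB adds 25 cents to the volume-only bill but $500 to the tiered one. The tiered plan is cheaper at some volumes; the point of the sketch is that its next bill is harder to forecast near a boundary.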

At observIQ, we hope to lead by example. We include all of our features in every plan. You only pay based on volume and retention. Our costs are transparent and predictable, and you never pay to add users, sources, or new features. Check us out!