Adapting to New Federal Regulations on Cybersecurity and Log Management

The Biden administration recently issued an executive order to regulate security practices among federal agencies and establishments. The order aims to modernize and strengthen government networks in pursuit of a more resilient federal cyber defense. It comes after a series of malicious cyberattacks that targeted public and private entities in the past year. SolarWinds, a government contractor, was hacked in one of the most significant breaches in US history, exposing both federal agencies and private companies to data leaks, losses, and tampering or destruction. The process enhancements now mandated for event logging, log management, and observability will trickle down to the foundation of our country’s cyber practices. Small businesses and massive government agencies alike will need to make some changes. How can your business stay current with the latest security requirements and compete in the ever-changing technical landscape?

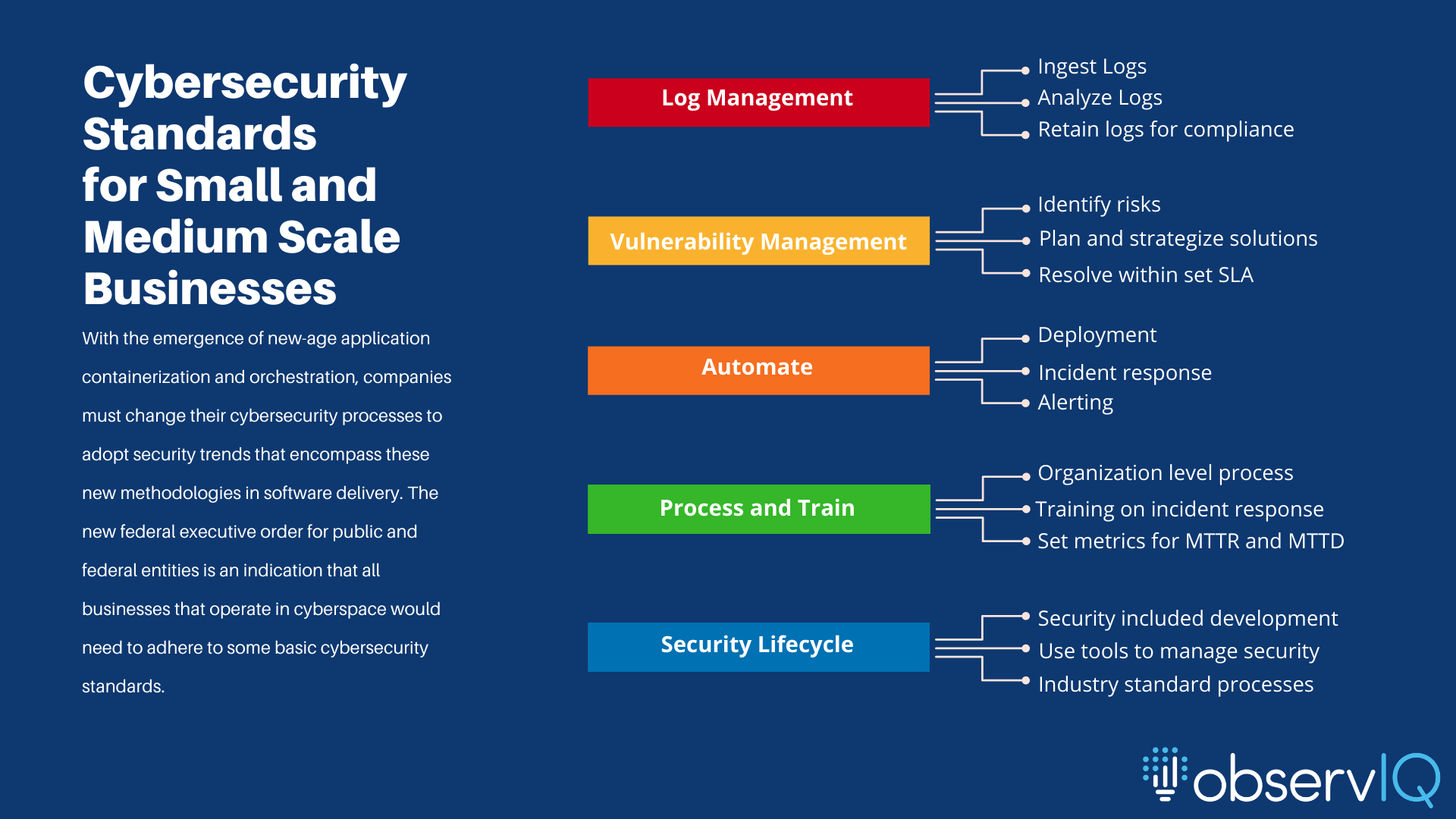

Small and medium-scale businesses must look at security as a practice and not an option:

The move to cloud-native applications has changed how IT and DevOps teams need to adapt and synchronize to build applications efficiently and quickly. In the constant race to stay competitive, many companies have been unable to keep up with security best practices as the landscape shifts from on-premises deployments to containerized cloud applications orchestrated with tools like Kubernetes and hosted on platforms like AWS. The solution to this unending race is to incorporate security into the software lifecycle, as we did with operations in the past. Security policies and mandates matter for verticals beyond the regulated bodies; they extend to anyone operating a business in cyberspace. The expectation is to move from the traditional build, test, and then secure model to a more holistic approach that builds security into every stage of software development. An application’s security and operational readiness rely on improving the observability of the application and the infrastructure on which it operates. Every business must continuously ask how it can gather and use telemetry data for security purposes and how efficiently it can manage that data across its required lifecycle.

The challenges cloud-native applications bring to security:

If a business has no strategy to manage its on-prem and virtual infrastructure effectively, there is no guarantee that transitioning to cloud-native applications will solve its security challenges. Cloud-native applications scale far more easily. To benefit from a highly malleable infrastructure, businesses need the operational discipline and observability to weigh scaling against the business results they derive from it. It is easy to get carried away by the scalability of cloud-native applications, but increased scalability brings increased risks and security concerns. The emergence of observability and OpenTelemetry puts security at the heart of cloud-native application deployment and management.

What to read from this executive order as a small or medium-scale business:

Authorized deployment pipeline: Small, agile teams still need processes. Authorized declarative pipelines bring visibility into your deployment. This executive order is a good prompt to set up an authorized pipeline across your development, testing, and deployment channels. An authorized pipeline keeps everyone involved in application development and deployment aware of the steps in a deployment, which reduces unforeseen human errors.

Zero Trust Architecture: “Zero trust” operates under the assumption that not all network users are friendly, requiring continuous credential verification for anyone connected to any port in your application environment. Automating authentication, even in managed networks, makes it easier to handle identification and authentication for individual components and multiple TLS endpoints. Securing access to applications via multi-factor authentication and encrypting data at rest and in transit are critical for maintaining a secure environment. For further reading, and to check your setup against established standards, see the zero trust architecture described in NIST Special Publication 800-207.
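
As one small illustration of encrypting data in transit and verifying identity on every connection, here is a minimal Python sketch that builds a strict TLS client context with the standard library `ssl` module. The function name `build_strict_client_context` and the optional certificate paths are hypothetical, chosen for this example only. This covers a single building block, not a complete zero trust architecture.

```python
import ssl
from typing import Optional


def build_strict_client_context(
    client_cert: Optional[str] = None,
    client_key: Optional[str] = None,
) -> ssl.SSLContext:
    """Return a TLS client context that always verifies the server.

    The certificate paths are hypothetical placeholders. Pass them only
    if the service also requires the client to prove its identity
    (mutual TLS).
    """
    # create_default_context() enables certificate verification and
    # hostname checking for client-side connections.
    context = ssl.create_default_context()

    # Refuse protocol versions older than TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2

    # Optionally present a client certificate so the server can
    # authenticate this component as well.
    if client_cert is not None:
        context.load_cert_chain(certfile=client_cert, keyfile=client_key)

    return context


if __name__ == "__main__":
    ctx = build_strict_client_context()
    print("Verifies server certificate:", ctx.verify_mode == ssl.CERT_REQUIRED)
    print("Checks hostname:", ctx.check_hostname)
```

A context like this can be passed to most standard library networking calls that accept an `ssl.SSLContext`, so each component verifies its peers instead of trusting the network it sits on.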

Vulnerability Management: Incident and vulnerability management are crucial components of application and infrastructure security. As technology stacks become more vendor-diverse and modular, manual vulnerability analysis for each module of an expansive service becomes impractical. The new executive order mandates that services and service providers publish their security vulnerabilities to customers. Transparency from individual service providers is the only way forward. Businesses need a standard process across the board to explore, find, remediate, and react to vulnerabilities. Mean time to detection (MTTD) is a crucial metric for gauging incident detection and response. Lowering MTTD requires periodic security audits, continuous improvement, and early implementation of security processes. A low MTTD generally contributes to a lower mean time to resolution (MTTR), a metric that gauges the time taken to resolve a security-related incident in the network. Businesses must work to close the gap between incident detection and resolution. For a comprehensive catalog of adversary tactics and techniques, visit MITRE ATT&CK, a publicly accessible knowledge base built on real-world observations of cyberattacks.
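
To make the two metrics concrete, here is a minimal Python sketch that computes them from a list of incident records. The `Incident` layout and the sample timestamps are made up for illustration. The sketch also assumes that time to resolution is measured from detection to resolution; some teams measure it from the start of the incident instead.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean
from typing import Sequence


@dataclass
class Incident:
    # Hypothetical record layout; real incident trackers will differ.
    occurred_at: datetime  # when the malicious activity began
    detected_at: datetime  # when the team first noticed it
    resolved_at: datetime  # when the incident was closed


def mean_time_to_detection(incidents: Sequence[Incident]) -> timedelta:
    """Average gap between an incident starting and being detected."""
    gaps = [(i.detected_at - i.occurred_at).total_seconds() for i in incidents]
    return timedelta(seconds=mean(gaps))


def mean_time_to_resolution(incidents: Sequence[Incident]) -> timedelta:
    """Average gap between detection and resolution (an assumption)."""
    gaps = [(i.resolved_at - i.detected_at).total_seconds() for i in incidents]
    return timedelta(seconds=mean(gaps))


# Illustrative sample data, not real incidents.
INCIDENTS = [
    Incident(
        occurred_at=datetime(2021, 5, 1, 8, 0),
        detected_at=datetime(2021, 5, 1, 10, 0),
        resolved_at=datetime(2021, 5, 1, 16, 0),
    ),
    Incident(
        occurred_at=datetime(2021, 5, 3, 9, 0),
        detected_at=datetime(2021, 5, 3, 13, 0),
        resolved_at=datetime(2021, 5, 3, 15, 0),
    ),
]

if __name__ == "__main__":
    print("MTTD:", mean_time_to_detection(INCIDENTS))   # 3:00:00
    print("MTTR:", mean_time_to_resolution(INCIDENTS))  # 4:00:00
```

Tracking both numbers over time, rather than as one-off values, shows whether audits and process changes are actually shortening the gap between detection and resolution.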

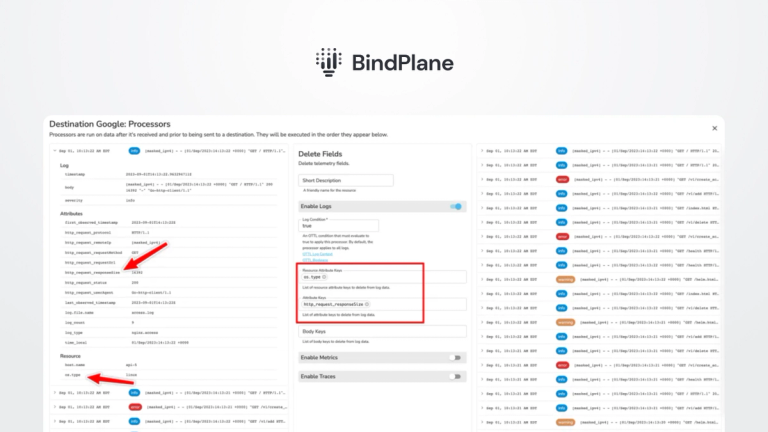

Log management: Observability and log management are at the core of security analysis. Log management provides visibility into application and network events. A well-defined log management process helps you sort your log sources into critical and non-critical components. Critical logs come from:

- Security sources such as firewalls and endpoints

- Devices controlling network traffic, such as routers and VPNs

- Authentication and identity control tools, such as user directories

- On-prem, cloud, or containerized applications and virtual infrastructure

Non-critical log sources include system logs, secondary routers, switches, etc.
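
The split above can be captured in a small lookup so that every new log source gets an explicit priority. The Python sketch below is only one possible way to encode it. The category names are hypothetical labels for the groups listed above, and anything not yet reviewed is returned as "unclassified" instead of being guessed.

```python
# Hypothetical category labels mirroring the groups described above.
CRITICAL_CATEGORIES = {
    "firewall",
    "endpoint",
    "router",
    "vpn",
    "user_directory",
    "application",
    "virtual_infrastructure",
}

NON_CRITICAL_CATEGORIES = {
    "system",
    "secondary_router",
    "switch",
}


def classify_source(category: str) -> str:
    """Return 'critical', 'non-critical', or 'unclassified' for a source."""
    if category in CRITICAL_CATEGORIES:
        return "critical"
    if category in NON_CRITICAL_CATEGORIES:
        return "non-critical"
    # Unknown sources are flagged for review instead of silently dropped.
    return "unclassified"


if __name__ == "__main__":
    for category in ("firewall", "switch", "printer"):
        print(category, "->", classify_source(category))
```

Keeping the mapping in one place makes it easy to review during audits and to extend as new kinds of sources come online.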

Many log management tools are on the market, so picking the right one for your security and compliance needs is essential. A log management tool built with enhanced security features should offer:

- The ability to aggregate logs from all the sources you intend to observe. Having a clear idea of the sources makes it easier to know the volume of log data your applications create and how much the log management tool needs to ingest.

- The ability to retain logs within the tool or archive them warm to a retention repository for remediation and analysis.

- Auto-parsing capabilities. Manual parsing configurations are time-consuming and become a hassle as you add more sources to your log management tool.

- Advanced alerting capabilities. Alerts come in handy for incident reporting and response, and the more alerting platforms the tool supports, the better.

- Visualization capabilities to create a security overview dashboard with key metrics to get a bird’s eye view of all the possible vulnerabilities and the current health of all your network security assets.

- Reporting capabilities using advanced metrics for all application and infrastructure assets.
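
When sizing a tool against the aggregation and retention points in this list, a back-of-the-envelope estimate of log volume helps. The Python sketch below multiplies event rate by average event size per source. The source names, rates, sizes, and the 30-day retention window are made-up numbers for illustration, so substitute measurements from your own environment.

```python
from dataclasses import dataclass
from typing import Sequence

SECONDS_PER_DAY = 86_400


@dataclass
class LogSource:
    # Hypothetical fields; measure these values in your own environment.
    name: str
    events_per_second: int
    avg_event_bytes: int


def daily_ingest_bytes(sources: Sequence[LogSource]) -> int:
    """Estimated bytes per day the log management tool must ingest."""
    return sum(
        s.events_per_second * s.avg_event_bytes * SECONDS_PER_DAY
        for s in sources
    )


def retention_bytes(sources: Sequence[LogSource], retention_days: int) -> int:
    """Estimated storage needed to keep logs for the retention window."""
    return daily_ingest_bytes(sources) * retention_days


# Illustrative numbers only.
SOURCES = [
    LogSource("firewall", events_per_second=200, avg_event_bytes=500),
    LogSource("web-app", events_per_second=50, avg_event_bytes=1000),
]

if __name__ == "__main__":
    daily = daily_ingest_bytes(SOURCES)
    print(f"Daily ingest: {daily / 1e9:.2f} GB")  # 12.96 GB
    print(f"30-day retention: {retention_bytes(SOURCES, 30) / 1e9:.1f} GB")  # 388.8 GB
```

The estimate ignores compression and indexing overhead, which vary by tool, so treat it as a starting point for comparing plans and not as a precise forecast.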

We hope this post helps you explore event log management software for your business. observIQ checks all the boxes necessary for security compliance. Try observIQ for free and explore how you can secure your applications with the industry’s best, OpenTelemetry-recommended log agent. Reach out to our support team if you have any questions. Stay observant with observIQ.